The death of the two-week sprint

The AI-Native software development lifecycle demands a change

For decades, the 2-week sprint cycle was the "sweet spot" of the Software Development Lifecycle (SDLC) for many engineering teams. It was:

- Long enough for teams to make meaningful progress

- Frequent enough to make timely course corrections

- Short enough to maintain focus on the sprint goal

Today, it is not uncommon to see teams releasing almost every day.

The cadence is no longer every two weeks; it is daily.

This is true not just for Cursor or my own team. The majority of the 35+ Engineering Managers I interviewed shared the same experience (I will quote some of their learnings later in this article). With AI adoption, we are able to ship more value within a two-week sprint, and for most of us that has resulted in more frequent releases.

Some of us fixed bugs the same week users reported them. Why wait two weeks when you can move faster?

After talking to so many Engineering Managers, and feeling like I have now covered my blind spots, I sat down to answer three questions:

- What really changed in software development after the release of LLMs that are extremely powerful for coding tasks?

- Which Software Development Lifecycle model works best for AI-native software development?

- What are the key takeaways for Engineering Managers?

Changes in Software Development Lifecycle (SDLC) after AI

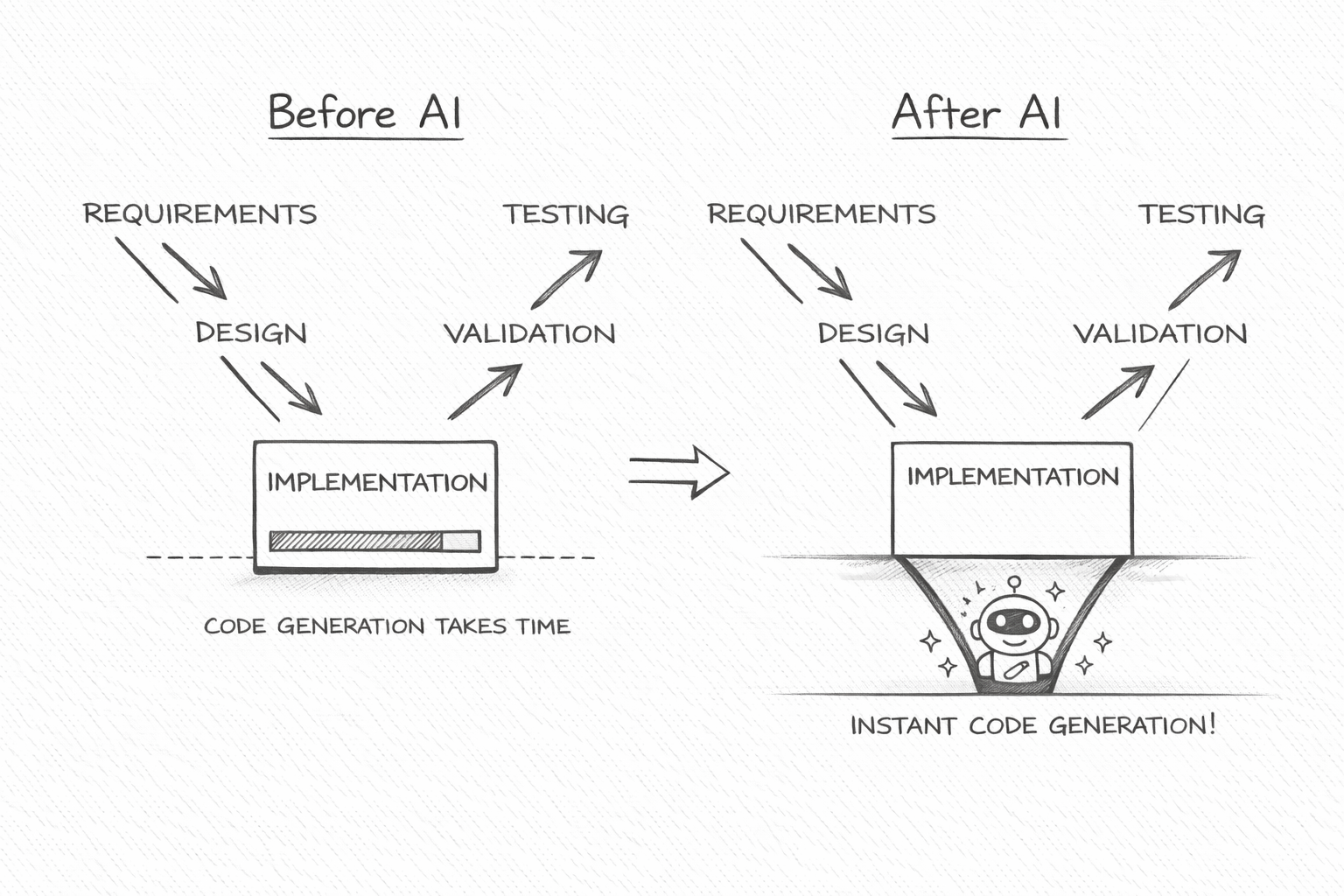

At a high level, the software development process looks the same as before:

Design -> Implement -> Validate

But is it really the same?

Implementation (the coding) is almost instantaneous now

With AI, implementation (coding) time has dropped from weeks to days. Earlier, implementation used to consume most of the sprint. Now it consumes the least time of all three steps (design, implement, validate).

So as soon as you reach implementation (the bottom of the V shape), the project quickly "bounces" back up into the validation phase.

Note: I will cover the V-Bounce SDLC model^[1] in more detail later in this article. Remember that this "bounce" is the key difference between the V-Bounce model and the older V-Model SDLC.

And there is much more nuance to notice than just the implementation time.

Natural language is the primary programming language

With AI, natural language has become the primary programming language. Because it is easy to convert a spec to code, the codebase is no longer the primary asset to maintain; the specification is.

Humans are now primarily verifiers

AI turned humans from "creators" into "verifiers". AI implements, while humans focus on providing high-quality inputs (requirements, context, intent, architecture) and verifying the AI output.

Earlier, the implementation was the bottleneck. The new bottleneck is clarity and verification.

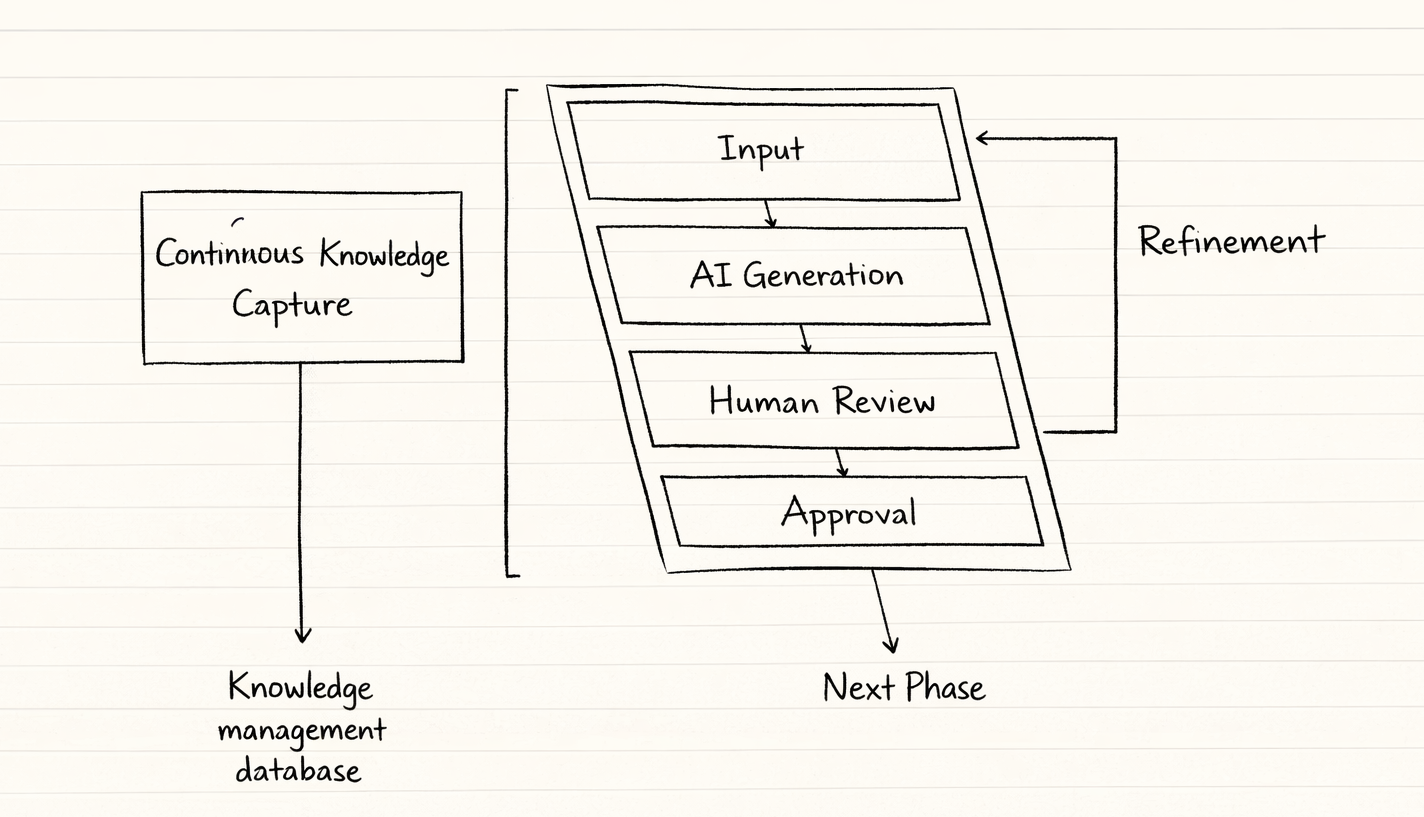

The V-Bounce model

The new V-Bounce model^[1] embraces this reality and highlights the mindset shift we need in our approach to the SDLC. Most of us have either already adopted it or will soon do so.

The six steps of AI-native development, as described in the research paper for the V-Bounce model:

1. Input: Human provides the requirements in natural language

2. AI Generation: Agents quickly generate PRDs, designs, and code

3. Human Review: The human interrogates the output for logic and intent

4. Refinement: The AI quickly iterates on human feedback in a tight, recursive loop

5. Approval: Human verifies whether the objectives of the current phase are met or not

6. Knowledge Capture: AI automatically captures insights into a comprehensive knowledge graph, preventing future architectural drift through centralized knowledge management

These steps occur multiple times every day. The two-week agile sprint was built on different assumptions.

So we let go of the old two-week sprint.

What else needs to change? Let me narrow that down to lessons for engineering managers in a world where the AI-native SDLC is the new reality.

Lessons for engineering managers

What changes do engineering managers need to make to get the most out of the modern AI-native development lifecycle?

Here are my top lessons for engineering management in the AI-native software development lifecycle.

1. Requirements, architecture, and design are the critical path

AI writes the code, but it does so well only when the quality of these inputs is extremely high. This was equally important earlier, but the impact is now instantly visible.

Early on, I made the mistake of assuming clarity without verification. As an engineering manager, I greenlit a project where everyone nodded along to the goal, but I never forced alignment on what "done" actually meant.

Recovery came from stopping the project midstream and rewriting the spec together. Not cleaner, also sharper. One owner, one success metric. One explicit tradeoff we were willing to accept. Progress snapped back immediately.

-- Edward Tian, Founder at GPTZero

Why bother when vibe coding works?

Vibe coding is: Prompt -> Hope -> Debug

And yes, it's fast. Frontier models often produce surprisingly good one-shot results. It feels like magic. Great for POCs. Great for exploration.

But stack a few implicit assumptions. Let a few silent divergences accumulate. Suddenly you're debugging decisions you never consciously made.

You might think:

So this is just another reminder that PRDs (Product Requirements Documents) and HLDs (High-Level Designs) matter?

That's not the complete story.

I asked many Engineering Managers about their experience leading AI-native engineering teams, and I found broad agreement with what Kush shared:

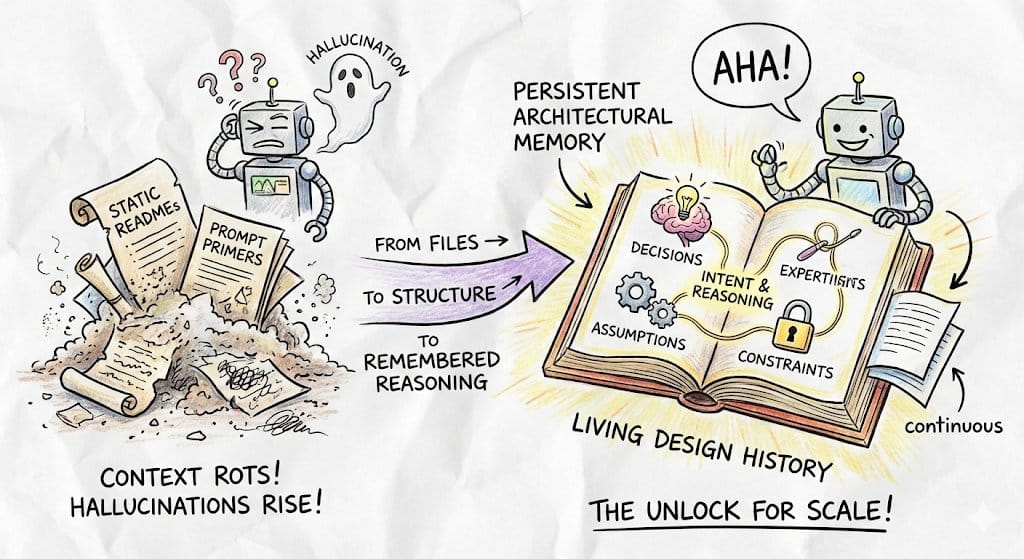

With AI, we were shipping faster than ever, until the progress stalled. READMEs decayed and every new feature required explaining the system from scratch. Restating intent began to cost more than generating code. It was not a tooling flaw, it was the absence of continuous architectural memory. We need systems that track decisions, assumptions, and constraints from Day 0. Not just files, but why the system looks the way it does.

-- Kush Mishra, Founder at Neander.ai (Prev. CTO at Noise and Partner at South Park Commons)

In the AI-native SDLC, requirements and architecture must not be treated as documentation overhead. They are the memory layer that prevents velocity from collapsing into chaos. And drafting the PRDs and HLDs only at the beginning does not finish the job.

We must continuously capture insights into a comprehensive knowledge graph to prevent future architectural drift.

Learning for Engineering Managers: Write PRDs and HLDs before you start code generation. Leverage AI to automate the continuous architectural memory.
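To make "architectural memory" a little more concrete, here is a minimal sketch of a decision log that records the why along with the what. The `Decision` fields, the `supersedes` link, and the JSON-lines rendering are all my own assumptions for illustration; none of the teams quoted here described this exact structure.

```python
import json
from dataclasses import dataclass, asdict, field
from typing import List


@dataclass
class Decision:
    """One architectural decision plus the reasoning behind it (hypothetical schema)."""
    id: str
    decision: str
    why: str
    assumptions: List[str] = field(default_factory=list)
    constraints: List[str] = field(default_factory=list)
    supersedes: str = ""  # id of an earlier decision this one replaces


def active_decisions(log):
    """Drop decisions that a later entry has superseded."""
    replaced = {d.supersedes for d in log if d.supersedes}
    return [d for d in log if d.id not in replaced]


def as_agent_context(log):
    """Render the active decisions as JSON lines that could be fed to an agent."""
    return "\n".join(json.dumps(asdict(d), sort_keys=True) for d in active_decisions(log))


# Hypothetical example entries.
log = [
    Decision("D1", "Use REST for the public API", "Clients already speak HTTP/JSON"),
    Decision("D2", "Store events in Postgres", "Team has operational experience",
             assumptions=["under 1k events/s"]),
    Decision("D3", "Move events to Kafka", "Load now exceeds the D2 assumption",
             supersedes="D2"),
]

active_ids = [d.id for d in active_decisions(log)]
print(active_ids)
print(as_agent_context(log))
```

The point of the `supersedes` link is that old decisions stay in the log as history, while only the current ones are handed to the agent as context.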

2. The specification as the "single source of truth"

Version control specifications alongside code. This creates a trail where every release is linked to specific spec versions and the tests that validated them. In API development, use the formal specification (like OpenAPI) to generate contract tests. These tests catch the implementation as soon as it drifts from the agreed-upon contract, which keeps the interface design and the backend code traceable to each other.

This is what spec-driven development looks like in the real world:
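As a rough illustration of a contract test derived from a spec, the sketch below checks a handler's response against a small OpenAPI-style fragment. The `SPEC` contents, the `/users/{id}` endpoint, and the `get_user` handler are hypothetical, and the hand-rolled checker covers only required fields and basic types. A real project would more likely load the committed spec file and use a proper schema validator.

```python
# Hypothetical spec fragment in OpenAPI 3 style. In a real repo this would be
# loaded from the versioned spec file committed next to the code.
SPEC = {
    "paths": {
        "/users/{id}": {
            "get": {
                "responses": {
                    "200": {
                        "content": {
                            "application/json": {
                                "schema": {
                                    "type": "object",
                                    "required": ["id", "email"],
                                    "properties": {
                                        "id": {"type": "integer"},
                                        "email": {"type": "string"},
                                    },
                                }
                            }
                        }
                    }
                }
            }
        }
    }
}

# Maps schema type names to Python types (only the subset used here).
PY_TYPES = {"integer": int, "string": str, "boolean": bool, "object": dict, "array": list}


def response_schema(spec, path, method, status):
    """Look up the JSON response schema for one operation in the spec."""
    operation = spec["paths"][path][method]
    return operation["responses"][status]["content"]["application/json"]["schema"]


def contract_violations(schema, payload):
    """Return human-readable mismatches between a payload and the schema."""
    problems = []
    for name in schema.get("required", []):
        if name not in payload:
            problems.append(f"missing required field: {name}")
    for name, rules in schema.get("properties", {}).items():
        if name in payload and not isinstance(payload[name], PY_TYPES[rules["type"]]):
            problems.append(f"wrong type for {name}: expected {rules['type']}")
    return problems


def get_user(user_id):
    """Stand-in for the real handler under test (hypothetical)."""
    return {"id": user_id, "email": "dev@example.com"}


schema = response_schema(SPEC, "/users/{id}", "get", "200")
ok = contract_violations(schema, get_user(7))      # conforming handler
drifted = contract_violations(schema, {"id": "7"})  # handler that drifted from the spec
print(ok)
print(drifted)
```

Because the expectations are read from the spec rather than written by hand, changing the spec changes the test, and a handler that no longer matches fails immediately.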

At RudderStack, we didn't initially trust AI for production development. But as we refined our AI-native SDLC around spec-driven development, it became indispensable for both speed and quality.

Our process:

1. It starts with a PRD. The engineer collaborates with AI to clarify and produce an HLD. That context is committed to Git for traceability.

2. Frontier models generate an implementation plan. From that plan, Linear tickets are created. Implementation happens in short bursts using cost-efficient models, alongside test generation and behaviour verification.

3. We maintain Agent.md / Claude.md for global context and task-specific skills. Engineers continuously capture decisions and learnings into these files so the agent improves over time.

4. The engineer raises the PR and remains accountable for what ships. CI/CD helps, but human code review is non-negotiable.

This process evolved through experiments. As our architectural memory compounds, engineers are increasingly comfortable delegating implementation to AI agents. Next, we're moving toward dev containers to ensure identical environments for humans and agents.

-- Mitesh Sharma, Director of Engineering at RudderStack

AI still makes mistakes, especially when faced with too many constraints or detailed specifications. So won't the spec-driven approach backfire?

True, AI is prone to errors and can be overwhelmed by complexity. But being spec-driven does not mean stuffing the context window unnecessarily. Structured decomposition is the key.

Specify (Start Small) -> Plan (Decompose) -> Generate -> Verify (Human/Test) -> Iterate

Another engineering leader put it this way:

Once we started treating AI as a junior developer rather than a magic box, quality, speed and team confidence all improved.

-- Rehan Ahmed, CTO at Smart Web Agency

You should not treat the AI as a magic box, but as a "junior developer". You give it clear, small tasks, review its work frequently, and assume it will make mistakes until proven otherwise. And all of this fits within spec-driven development.
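The Specify -> Plan -> Generate -> Verify -> Iterate loop above can be sketched in a few lines. This is only an illustration under my own assumptions: `Task`, `run_task`, and `fake_generate` are hypothetical names, and `fake_generate` stands in for a real model call by deliberately getting the answer wrong on the first try.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Task:
    """One small, independently verifiable slice of the spec."""
    description: str
    verify: Callable[[str], bool]  # a test or human check for this slice
    attempts: List[str] = field(default_factory=list)


def run_task(task: Task, generate: Callable[[str, List[str]], str], max_attempts: int = 3) -> str:
    """Generate -> Verify -> Iterate, treating output as wrong until verified."""
    for _ in range(max_attempts):
        candidate = generate(task.description, task.attempts)
        if task.verify(candidate):
            return candidate
        task.attempts.append(candidate)  # failed attempts become feedback
    raise RuntimeError(f"escalate to a human: {task.description!r}")


def fake_generate(description: str, failed: List[str]) -> str:
    """Hypothetical stand-in for a model call; correct only on the second try."""
    if not failed:
        return "def add(a, b): return a - b"
    return "def add(a, b): return a + b"


def add_works(code: str) -> bool:
    """Verification step: run the candidate and check its behaviour.

    exec is acceptable for this toy example only; real generated code
    should run in an isolated environment.
    """
    scope = {}
    exec(code, scope)
    return scope["add"](2, 3) == 5


# Plan: the spec decomposed into small tasks (a single one here).
plan = [Task("implement add(a, b)", verify=add_works)]
results = [run_task(task, fake_generate) for task in plan]
print(results[0])
print(len(plan[0].attempts))
```

Each task carries its own check, so a wrong attempt is caught at the smallest possible scope, and a task that keeps failing is escalated instead of silently accepted.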

3. AI test generation is not optional

When requirements are planned and put together as a spec, generate the corresponding validation tests at the same time, so that the code is validated immediately after implementation. Accelerating the validation phase improves productivity by a huge margin.

This creates an immediate "traceability matrix," linking every requirement directly to a test case before a single line of code is written.
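A traceability matrix can be as simple as a mapping from requirement IDs to the tests that cover them. The sketch below builds one and flags requirements that have no test yet. The requirement IDs, requirement texts, and test names are made up for illustration.

```python
# Hypothetical requirement IDs taken from a spec.
REQUIREMENTS = {
    "REQ-1": "User can log in with email and password",
    "REQ-2": "Account locks after five failed attempts",
    "REQ-3": "User can reset a forgotten password",
}

# Hypothetical tests, each tagged with the requirements it validates.
TESTS = {
    "test_login_success": ["REQ-1"],
    "test_login_wrong_password": ["REQ-1", "REQ-2"],
    "test_lockout_after_five_failures": ["REQ-2"],
}


def traceability_matrix(requirements, tests):
    """Map every requirement ID to the sorted list of tests that cover it."""
    matrix = {req_id: [] for req_id in requirements}
    for test_name, covered in tests.items():
        for req_id in covered:
            matrix[req_id].append(test_name)
    return {req_id: sorted(names) for req_id, names in matrix.items()}


matrix = traceability_matrix(REQUIREMENTS, TESTS)
uncovered = [req_id for req_id, names in matrix.items() if not names]
print(matrix)
print(uncovered)  # requirements that still need a test
```

Running a check like this before code generation starts makes the gap visible early: here `REQ-3` has no test, so it is not yet ready to implement.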

AI didn't make us 10x faster, it made our hidden assumptions visible. Code is cheap now. Decision quality isn't. The new bottleneck is problem framing, context preservation, and evaluation rigor. We had to redesign our SDLC around traceability and constraint clarity, otherwise AI just scaled confusion.

-- Ritesh Mathur, Co-founder at chetto.ai

We also need to ensure that when requirements change, the AI identifies exactly which tests need updates. Without effective use of AI in automated test generation, this can become a slowdown, because a human then has to find and update the relevant tests manually every time, which is time-consuming and error-prone.
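Here is a small sketch of that impact analysis, assuming each test is already linked to requirement IDs. It compares two versions of a spec and lists the tests to revisit, plus any changed requirements that have no test at all. All IDs, texts, and test names are hypothetical.

```python
def changed_requirements(old_spec, new_spec):
    """IDs that were added, removed, or reworded between two spec versions."""
    ids = set(old_spec) | set(new_spec)
    return sorted(r for r in ids if old_spec.get(r) != new_spec.get(r))


def affected_tests(changed, test_links):
    """Tests linked to at least one changed requirement."""
    changed_set = set(changed)
    return sorted(name for name, reqs in test_links.items() if changed_set & set(reqs))


def untested(changed, new_spec, test_links):
    """Changed requirements in the new spec that no test covers yet."""
    covered = {r for reqs in test_links.values() for r in reqs}
    return [r for r in changed if r in new_spec and r not in covered]


# Hypothetical spec versions and test links.
old_spec = {
    "REQ-1": "Lock the account after five failed attempts",
    "REQ-2": "Reset password by email",
}
new_spec = {
    "REQ-1": "Lock the account after three failed attempts",
    "REQ-2": "Reset password by email",
    "REQ-3": "Support passkeys",
}
test_links = {
    "test_lockout": ["REQ-1"],
    "test_reset_email": ["REQ-2"],
    "test_login_flow": ["REQ-1", "REQ-2"],
}

changed = changed_requirements(old_spec, new_spec)
to_update = affected_tests(changed, test_links)
to_write = untested(changed, new_spec, test_links)
print(changed)
print(to_update)
print(to_write)
```

The output of a step like this is what you would hand to the agent: a short, exact list of tests to regenerate, instead of asking it to rediscover the links from the whole codebase.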

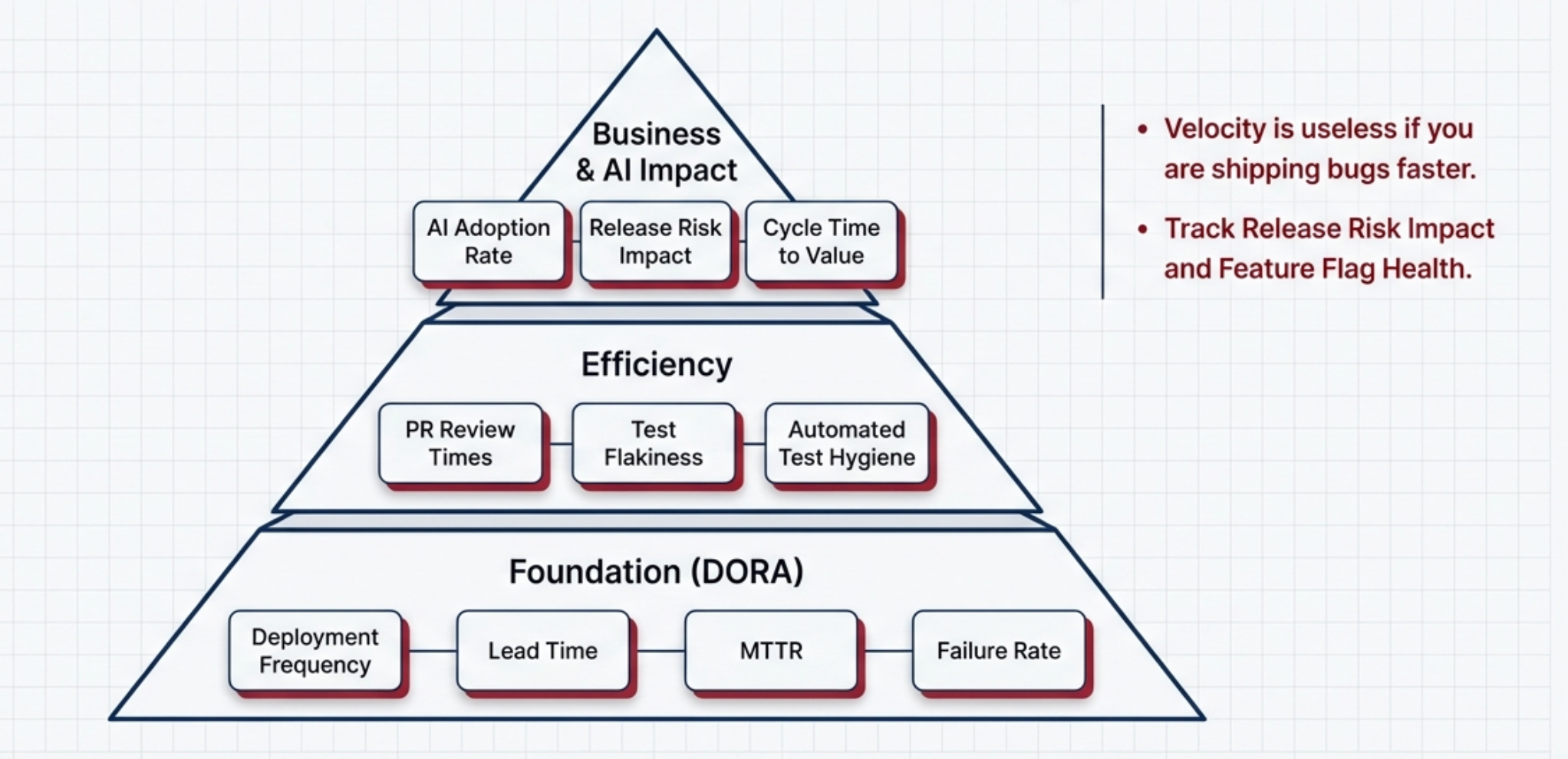

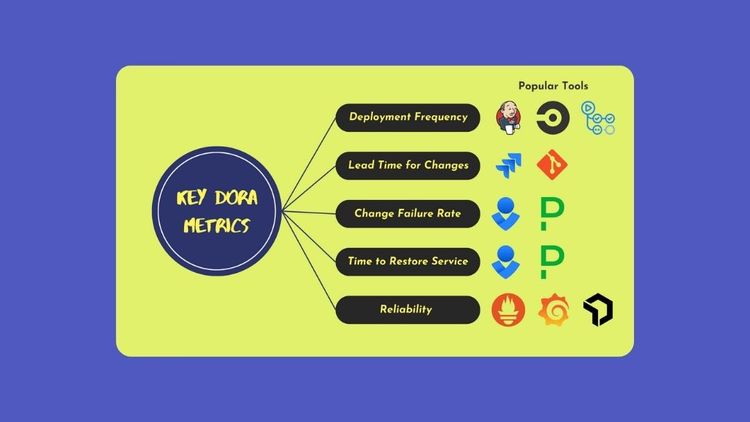

4. Rethink velocity and redefine engineering metrics

Now that we are shipping in days/hours and not weeks, we need to recalibrate how we measure engineering excellence.

Velocity is useless if you are shipping bugs faster

Multiple daily deploys are no longer impressive. The standard is moving toward on-demand, near-instantaneous delivery. The DORA benchmarks need to evolve accordingly.
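One way to keep speed honest is to always report it next to a quality signal. The sketch below computes two of the DORA metrics, deployment frequency and change failure rate, from a deploy log. The log format and the data in `DEPLOYS` are invented for illustration; your own pipeline will record this differently.

```python
from datetime import date

# Hypothetical deploy log for one work week: (day, caused_incident)
DEPLOYS = [
    (date(2025, 3, 3), False),
    (date(2025, 3, 3), True),
    (date(2025, 3, 4), False),
    (date(2025, 3, 5), False),
    (date(2025, 3, 5), True),
    (date(2025, 3, 6), False),
    (date(2025, 3, 7), False),
    (date(2025, 3, 7), False),
]


def deployment_frequency(deploys, days):
    """Average number of deploys per day over the period."""
    return len(deploys) / days


def change_failure_rate(deploys):
    """Share of deploys that caused an incident."""
    if not deploys:
        return 0.0
    failures = sum(1 for _, failed in deploys if failed)
    return failures / len(deploys)


frequency = deployment_frequency(DEPLOYS, days=5)
failure_rate = change_failure_rate(DEPLOYS)
print(f"{frequency:.1f} deploys/day, {failure_rate:.0%} of them caused an incident")
```

In this made-up week the team ships 1.6 times a day, but one in four deploys breaks something. Looking at the first number alone would hide exactly the problem this lesson is about.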

5. The evolving role and performance of engineers

Seniority is not defined by the volume of manual code. It is about the ability to provide strong, informed opinions to guide AI outputs.

Senior engineers must be the "context makers" providing the strategic intent of the business.

As Mitesh reflects on his team's journey into AI-native software development:

At RudderStack, initially the team was skeptical of using AI in software engineering. We started seeing positive results last year in terms of code quality and engineer performance. Before AI, developers used to get exhausted while writing a lot of code. Now that code generation is fast, developers can focus on making it better. If they don’t like something, they can rewrite it faster. The developer is always accountable for the code they ship using AI. Developers are always in the loop and will always be there, but we see their role changing from writing code to guiding AI, designing guidelines, and building pipelines.

-- Mitesh Sharma, Director of Engineering at RudderStack

When AI is this capable, especially with models like Claude Opus 4.6 and GPT 5.3 Codex, will engineering managers still be relevant?

The Jevons paradox in economics states that when a resource becomes more efficient to use, its total consumption often increases. One example is the rise in coal consumption after Watt's steam engine greatly improved on the efficiency of earlier coal-fired engines. We are seeing a similar story here.

The reduced cost and effort of software development brought by AI are leading to increased demand for software, rather than a reduction in overall development activity.

This increased demand will require not only new AI tooling but also talented engineering managers who can continue to innovate and structure the new software development lifecycle. The Invide job feed shows that engineering management roles are increasing, while developer roles are decreasing, and the nature of the roles has changed significantly.

Conclusion

When the execution phase of a feature effectively collapses from days to hours, the 14-day cycle becomes an artificial delay. In this article, I analyzed how AI is changing the software development lifecycle and how engineering managers can navigate and capitalize on these changes. It starts with letting go of old constraints like the two-week sprint.

The teams that redesign their lifecycle around architectural memory, traceability, and constraint clarity will compound speed. The rest will just ship confusion faster.

This essay does not end here. Share your opinions via comments or DM and I will incorporate them in the essay (of course, I will credit where it's due).

References

This essay wouldn't have been possible without 35+ Engineering Managers who generously shared their time and insights through interviews and email.

The following papers helped me speed up my research.

- A Theoretical and Practical New Methodology - https://arxiv.org/abs/2408.03416

- Spec-Driven Development: From Code to Contract - https://arxiv.org/abs/2510.03920

- Exploring the Impact of Generative Artificial Intelligence on Software Development in the IT Sector: Preliminary Findings on Productivity, Efficiency and Job Security. University of Gdansk. - https://arxiv.org/abs/2508.16811

- Why Does the Engineering Manager Still Exist in Agile Software Development? International Journal of Software Engineering & Applications - https://doi.org/10.5121/ijsea.2025.16502

- Engineering Ethics and Management Decision-Making. International Journal of Innovative Science and Research Technology (IJISRT) - https://doi.org/10.38124/ijisrt/IJISRT24MAY1683
